Prompt Judge: Rubric Grader + Fixes + Rewrite + Tests (1–5 Scores)

Description

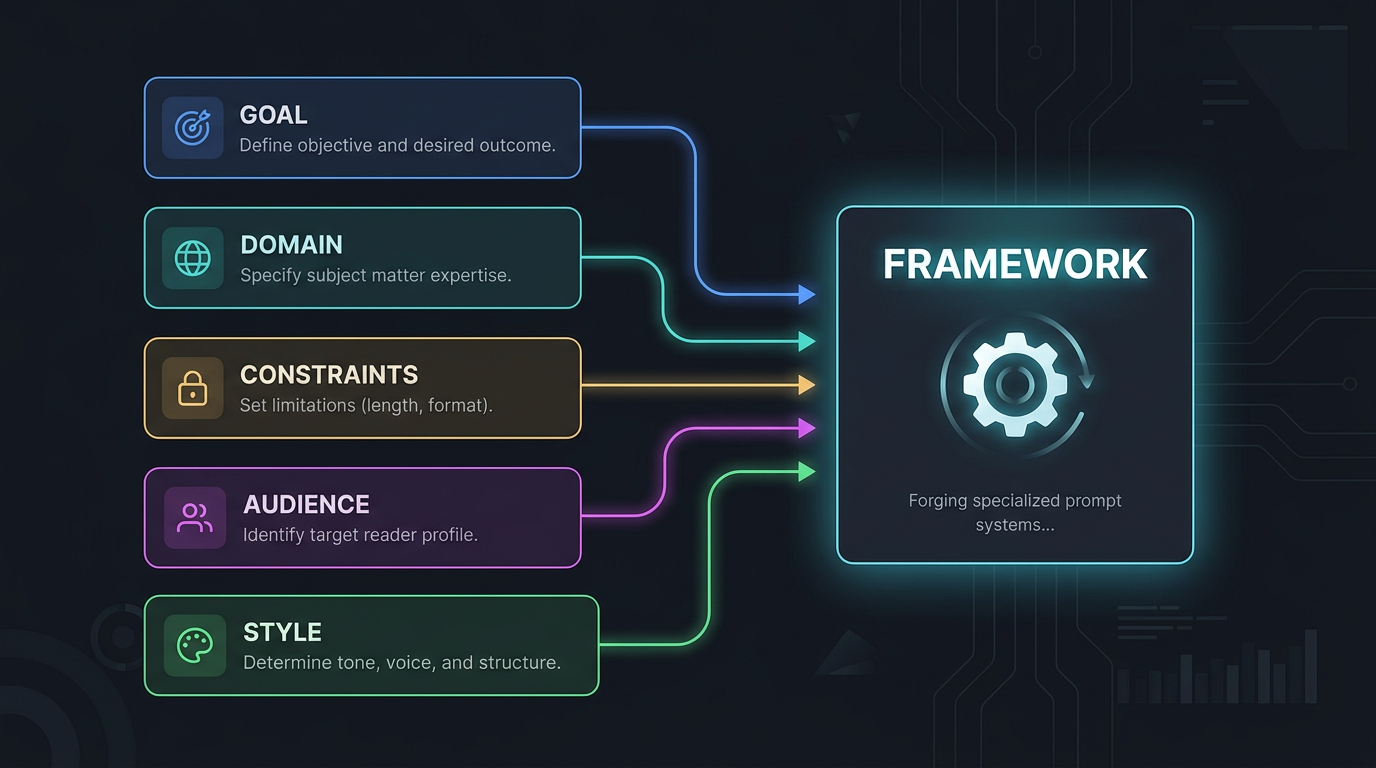

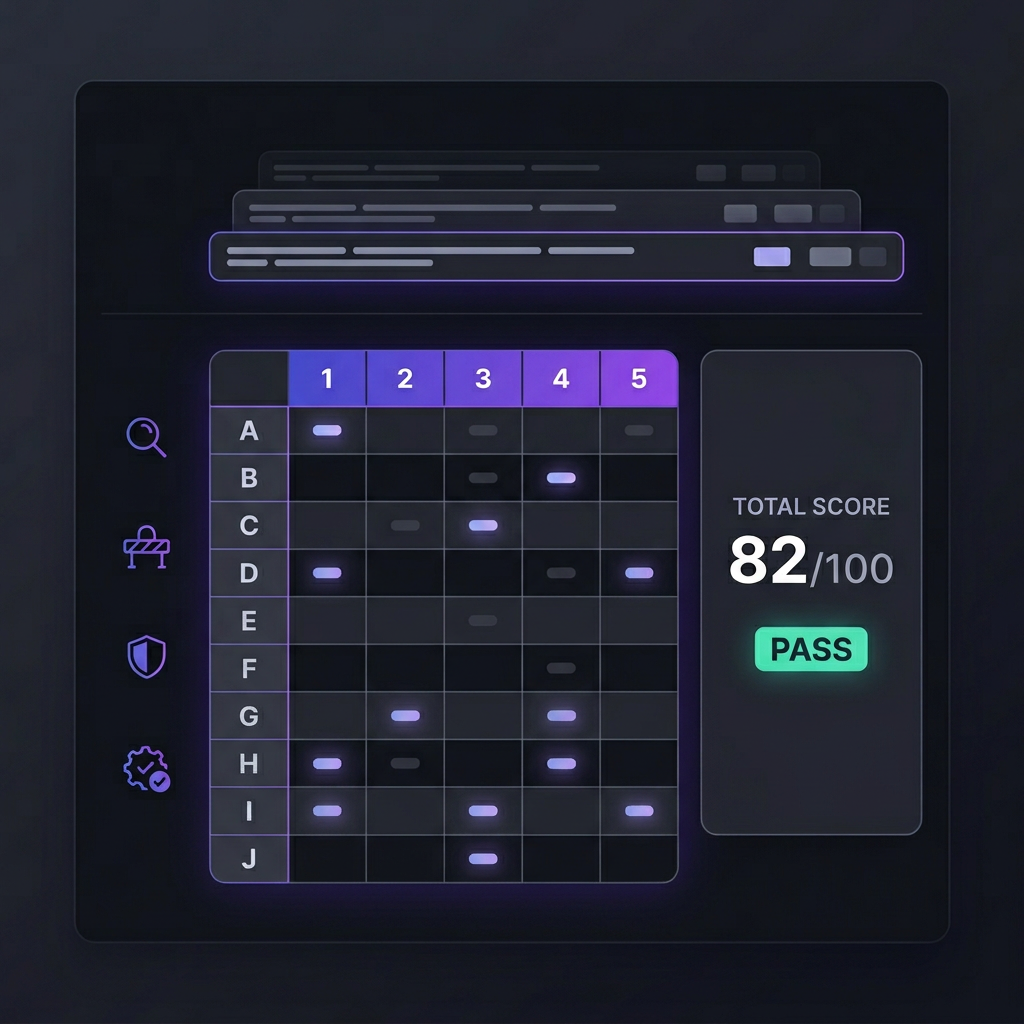

Drop-in overlay that grades your prompts with numeric scores (rubric: clarity, grounding, robustness), prescribes exact edits for weak areas, outputs PROMPT_V2, and generates a test suite. Starts with "Provide A prompt for JUDGEMENT". Works in any LLM.

Usage Examples

**Example 1: Simple rewrite**

User: "Write a blog post about AI."

Overlay → "Provide A prompt for JUDGEMENT"

User: [pastes prompt]

Output: Scores (Clarity:2/5 "Too vague"), Prescriptions ("Add output format: H1+H2+bullets"), PROMPT_V2, 6 tests.

**Example 2: High-risk grading**

User: "Grade this medical prompt: [paste]"

Output: RISK_LEVEL=high → threshold 0.90, stricter weights on grounding/safety, 10 tests.

**Example 3: Batch A/B**

User: "Prompt1 ---PROMPT--- Prompt2"

Output: Side-by-side scorecard, winner recommendation.

Customer Reviews

No reviews yet. Be the first to review!